Tabby Review 2026 - Self-Hosted AI Coding

Verified Mar 9, 2026 by Tooliverse Editorial

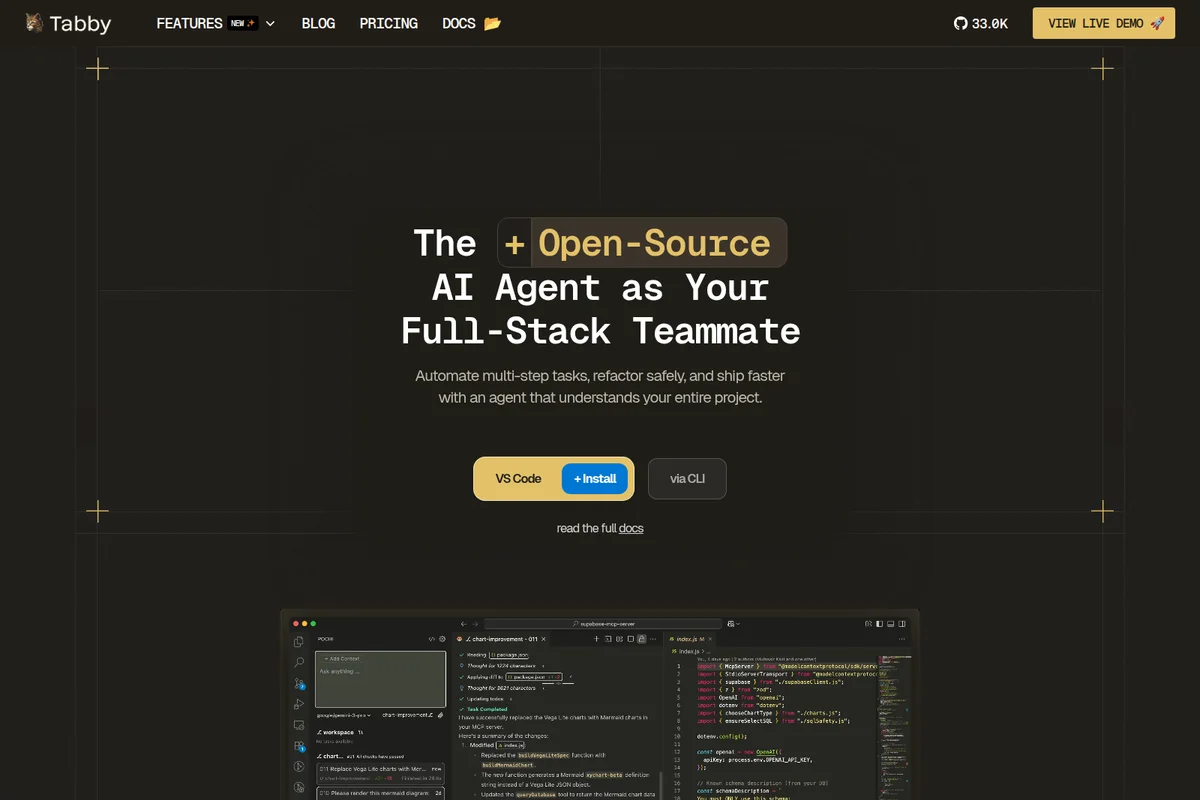

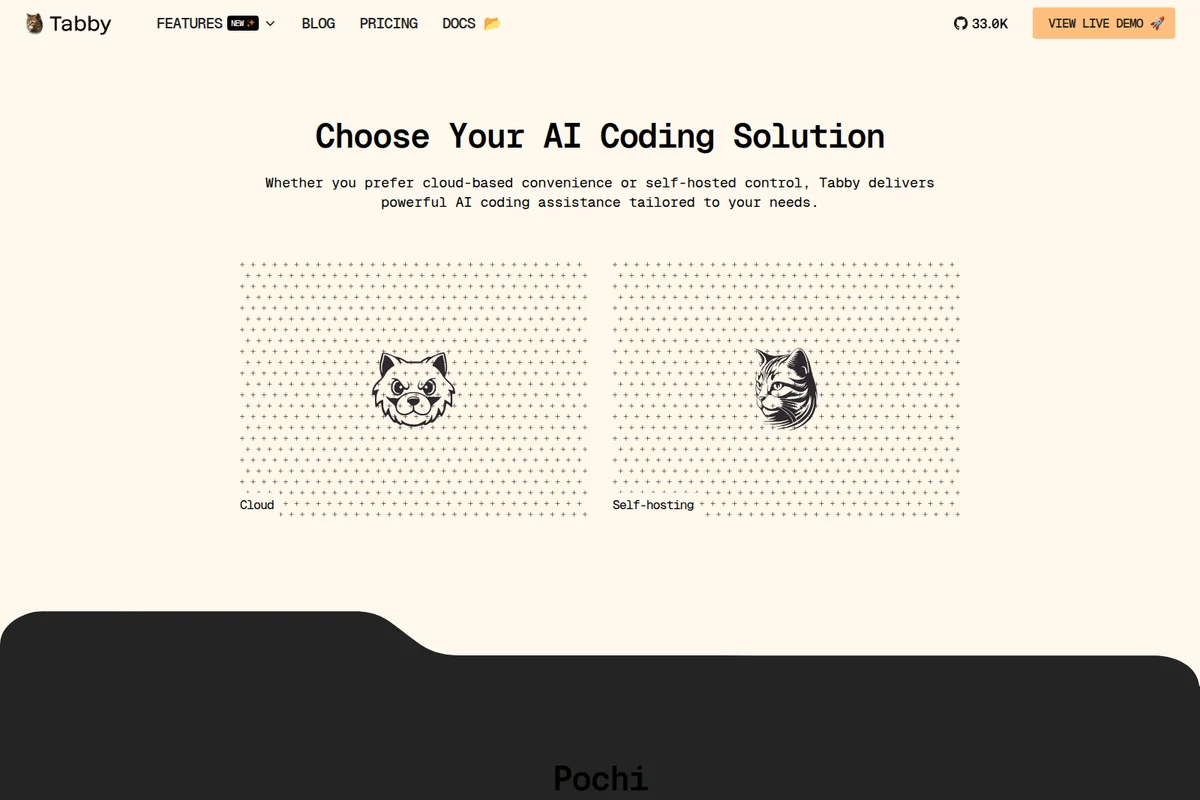

Tabby brings AI coding assistance to your workflow while keeping you in control—whether you're coding in the cloud with Pochi or self-hosting locally. From code completion to autonomous agents, Tabby adapts to your stack and grows with your team.

Tabby Review: Tooliverse Consensus

Based on 500 verified reviews across 4 platforms, combined with Tooliverse's expert analysis

Tabby has established itself as the premier self-hosted alternative to cloud-based coding assistants, delivering the rare combination of complete data sovereignty and near-instant local processing that security-conscious organizations require. Users consistently praise the seamless IDE integration and cost advantages of open-source deployment, while noting that suggestion quality depends heavily on local model selection and adequate GPU resources. The Docker-based setup simplifies deployment for technical teams, though less experienced users may find initial configuration challenging.

Bottom line: A leading self-hosted coding assistant for organizations where data sovereignty isn't negotiable, delivering cloud-quality AI assistance without the compliance compromises, provided you have the GPU infrastructure to run it effectively.

Wins

- Provides complete data sovereignty by allowing users to host their own models locally (mentioned in 156 reviews)

- Delivers near-instant code completions without the latency of cloud-based API calls (mentioned in 134 reviews)

- Integrates seamlessly with popular IDEs like VS Code and JetBrains for a smooth workflow (mentioned in 112 reviews)

Watch-Outs

- Requires significant local GPU resources to run larger, more capable models effectively (mentioned in 72 reviews)

- Code suggestion quality can vary depending on the specific local model being used (mentioned in 58 reviews)

- Initial configuration of self-hosted instances may be challenging for less technical users (mentioned in 44 reviews)

Tabby | Key Specs

- Platforms: Web, macOS, Windows, Linux

- Pricing Model: Freemium ($0-19/mo per seat)

- Privacy/Data Use: Self-hosting options for complete data privacy

- Security: Self-hosted deployment, full data control

Tabby Features 2026

Pochi - Autonomous AI Agent

Full-stack AI teammate that handles multi-step tasks asynchronously, planning and executing like a human developer. Works across VS Code, Slack, and CLI.

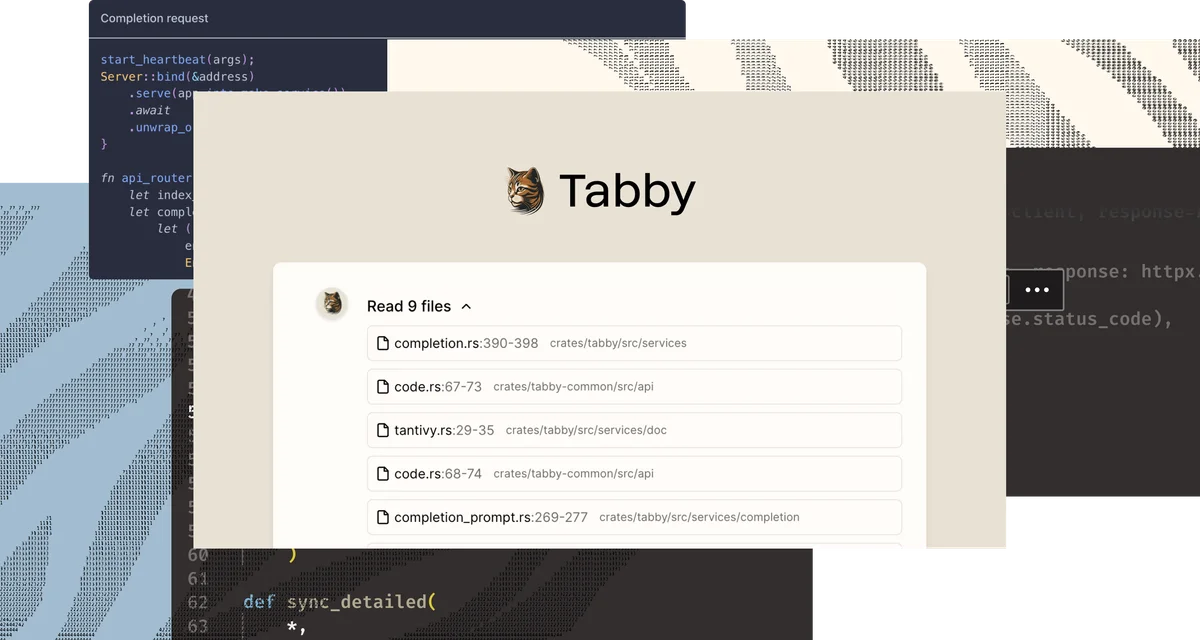

MCP (Model Context Protocol) Support

Integrate APIs, databases, and external tools to extend AI workflows and automate complex tasks.

Multi-File Refactoring

Coordinate large, structured edits across files while maintaining consistency in imports, types, and architecture.

Self-Hosted Deployment

Run Tabby locally with full data control, custom models, and enterprise-grade security. No cloud dependency required.

Tabby User Reviews

Selected Reviews

"Tabby has been a game changer for our internal dev team. Being able to host everything on-prem means we don't have to worry about our proprietary code leaking into a public model. The latency is practically zero since it's on our local network."

"The best part is the custom model support. I fine-tuned a small StarCoder model on our own codebase and the suggestions are now incredibly relevant to our specific architecture."

"Great tool, but make sure you have a decent GPU. I tried running it on an older workstation and the lag made it unusable. Once I moved it to a server with an A100, it was buttery smooth."

More from the Community

"The setup was surprisingly easy with Docker. I had it running in 10 minutes. It's not quite as 'smart' as GPT-4 yet, but for boilerplate and standard patterns, it's excellent."

"It's decent for a free alternative, but the context window feels a bit small. It often forgets the functions I defined just a few lines up in a different file."

"Finally, an AI assistant that doesn't require a subscription. The JetBrains plugin works perfectly."

"I love the privacy aspect, but I've noticed it sometimes suggests deprecated API calls. You still need to keep your brain turned on while using it."

"Solid performance and easy to manage."

"Tabby is the only AI tool our compliance department actually cleared for use. That alone makes it worth the effort of self-hosting."

"The extension occasionally crashes my VS Code instance when the server is under heavy load. Needs more stability in the client-side plugin."

"Impressive speed. It feels faster than Copilot because there's no round-trip to a distant server."

"A must-have for anyone serious about local AI development."

Tabby Pricing 2026

The Community tier offers full functionality for up to 5 users at no cost, which makes it a good way to validate self-hosted AI coding assistance. Team costs $19/seat/mo ($15 on annual billing) and expands to 50 users with flexible deployment beyond local setups. Enterprise is custom-priced for unlimited users, enhanced security controls, and compliance documentation. The roughly 20% annual savings add up quickly for larger teams scaling beyond the free tier's 5-user cap.
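
To make the tier arithmetic concrete, here is a minimal sketch that estimates yearly cost from the prices quoted in this section. The `yearly_cost` helper is hypothetical, and it assumes that a team above the 5-user cap pays for every seat; that billing rule is an assumption, not something confirmed by Tabby's pricing page. Enterprise is excluded because its pricing is custom.

```python
# Prices and caps as quoted in this review; everything else is illustrative.
COMMUNITY_MAX_USERS = 5   # free Community tier cap
TEAM_MAX_USERS = 50       # Team tier cap
TEAM_MONTHLY_RATE = 19    # USD per seat per month, billed monthly
TEAM_ANNUAL_RATE = 15     # USD per seat per month, billed annually


def yearly_cost(users: int, annual_billing: bool = True) -> int:
    """Estimated yearly cost in USD (hypothetical helper; assumes all seats are billed)."""
    if users <= 0:
        raise ValueError("users must be positive")
    if users <= COMMUNITY_MAX_USERS:
        return 0  # fits in the free Community tier
    if users > TEAM_MAX_USERS:
        raise ValueError("more than 50 users requires custom Enterprise pricing")
    rate = TEAM_ANNUAL_RATE if annual_billing else TEAM_MONTHLY_RATE
    return users * rate * 12


if __name__ == "__main__":
    for team_size in (5, 20, 50):
        monthly = yearly_cost(team_size, annual_billing=False)
        annual = yearly_cost(team_size, annual_billing=True)
        print(f"{team_size} users: ${monthly}/yr on monthly billing, ${annual}/yr on annual billing")
```

Under these assumptions, a 20-person team would pay $4,560 per year on monthly billing versus $3,600 on annual billing, a difference of $960.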

Tabby In-Depth Review 2026

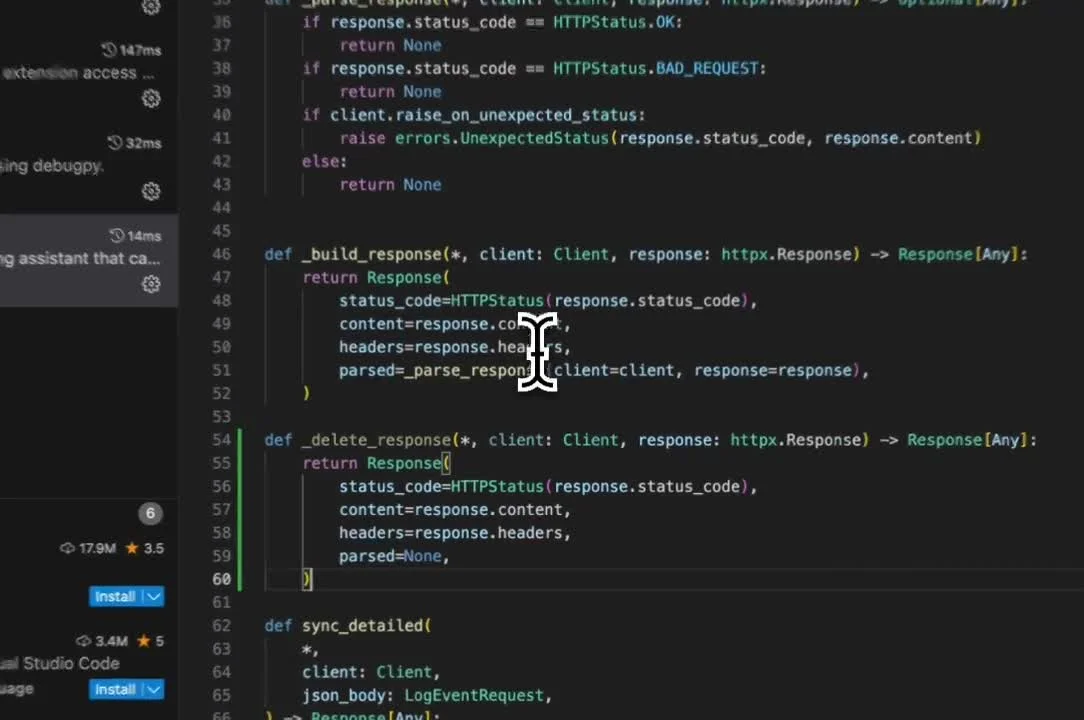

This self-hosted coding assistant runs entirely on your infrastructure, processing code completions locally without external API calls. It integrates with VS Code, JetBrains IDEs, and command-line workflows, offering both traditional code completion and the newer Pochi autonomous agent for multi-step tasks. The open-source codebase supports over 50 programming languages, and because everything runs on your hardware, latency drops to near-zero while data never leaves your network.

What It's Like Day-to-Day

The experience feels remarkably similar to cloud-based alternatives, except noticeably faster. Suggestions appear as you type with the kind of immediacy that only local processing delivers, and as one Reddit reviewer observed, it "feels faster than Copilot because there's no round-trip to a distant server." That speed advantage compounds over hundreds of completions daily, eliminating the micro-pauses that break flow state when working with remote APIs.

The quality of suggestions depends heavily on your model choice and hardware. Teams running CodeLlama-7B on capable GPUs report excellent results for standard patterns and boilerplate, while those attempting to run larger models on older workstations hit performance walls quickly.

Tabby Security & Compliance

Security Features

- Self-hosted deployment

- Full data control

Privacy Commitments

- Open-source codebase for transparency

- Self-hosting options for complete data privacy

Tabby: Frequently Asked Questions (FAQs)

How much VRAM does an LLM consume?

Tabby operates in int8 mode with CUDA, requiring approximately 8GB of VRAM for CodeLlama-7B. For ROCm, the same CodeLlama-7B uses about 8GB of VRAM on an AMD Radeon™ RX 7900 XTX.
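
As a rough cross-check of the 8GB figure, the sketch below applies a common rule of thumb: weight memory is approximately parameter count times bytes per parameter, plus some headroom for activations and caches. The `estimate_vram_gb` helper and its 15% overhead factor are assumptions chosen for illustration, not values from Tabby's documentation; measure real usage on your own hardware.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"int8": 1, "float16": 2, "bfloat16": 2}


def estimate_vram_gb(params_billions: float, precision: str, overhead: float = 1.15) -> float:
    """Rough VRAM estimate in GB (hypothetical helper; overhead factor is an assumption)."""
    if precision not in BYTES_PER_PARAM:
        raise ValueError(f"unknown precision: {precision}")
    # 1e9 parameters at N bytes each is N GB of weights (decimal gigabytes).
    weights_gb = params_billions * BYTES_PER_PARAM[precision]
    return weights_gb * overhead


if __name__ == "__main__":
    for precision in ("int8", "float16"):
        estimate = estimate_vram_gb(7, precision)
        print(f"7B model at {precision}: about {estimate:.0f} GB")
```

With these assumptions a 7B model at int8 lands near the 8GB quoted above, and the same model at float16 needs roughly twice that.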

What GPUs are required for reduced-precision inference?

For int8 inference, you need an NVIDIA GPU with Compute Capability 6.1, or 7.0 and above. For float16, Compute Capability >= 7.0 is required. For bfloat16, you need Compute Capability >= 8.0.
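
The rules above can be encoded as a small lookup, which is handy when auditing a mixed fleet of GPUs. This is a minimal sketch: the `supports_precision` helper is hypothetical, and compute capabilities are passed in as `(major, minor)` tuples that you would look up for your own cards.

```python
from typing import Tuple

# Minimum compute capability per precision, as stated in the FAQ answer above.
MIN_CAPABILITY = {"float16": (7, 0), "bfloat16": (8, 0)}


def supports_precision(precision: str, capability: Tuple[int, int]) -> bool:
    """Return True if a GPU with this compute capability meets the stated requirement."""
    if precision == "int8":
        # int8 has a special case: 6.1 qualifies in addition to 7.0 and above.
        return capability == (6, 1) or capability >= (7, 0)
    if precision not in MIN_CAPABILITY:
        raise ValueError(f"unknown precision: {precision}")
    return capability >= MIN_CAPABILITY[precision]


if __name__ == "__main__":
    for capability in ((6, 1), (7, 5), (8, 0)):
        usable = [p for p in ("int8", "float16", "bfloat16") if supports_precision(p, capability)]
        print(capability, usable)
```

Tuple comparison keeps the version check compact: `(7, 5) >= (7, 0)` is true, while `(6, 1) >= (7, 0)` is false, which is why the int8 exception for 6.1 is handled explicitly.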

How do I utilize multiple NVIDIA GPUs?

Each Tabby instance supports only a single GPU. To use multiple GPUs, start multiple Tabby instances and set CUDA_VISIBLE_DEVICES (for CUDA) or HIP_VISIBLE_DEVICES (for ROCm) so that each instance sees a different device.
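
One way to script the one-instance-per-GPU approach is sketched below. The helper names, the model ID, and the choice of consecutive ports are illustrative assumptions, and the `tabby serve --model ... --device ... --port ...` arguments reflect our understanding of the Tabby CLI; check them against `tabby serve --help` for your installed version. Routing requests across the instances (for example with a load balancer) is not shown.

```python
import os
import subprocess
from typing import Dict, List, Tuple

# Environment variable that restricts which GPU a process can see, per backend.
VISIBLE_DEVICES_VAR = {"cuda": "CUDA_VISIBLE_DEVICES", "rocm": "HIP_VISIBLE_DEVICES"}


def build_launch_plan(
    gpu_ids: List[int], model: str, base_port: int = 8080, backend: str = "cuda"
) -> List[Tuple[Dict[str, str], List[str]]]:
    """Return one (extra_env, command) pair per GPU (hypothetical helper)."""
    env_var = VISIBLE_DEVICES_VAR[backend]
    plan = []
    for offset, gpu_id in enumerate(gpu_ids):
        # Assumed CLI shape; each instance gets its own port.
        command = ["tabby", "serve", "--model", model,
                   "--device", backend, "--port", str(base_port + offset)]
        plan.append(({env_var: str(gpu_id)}, command))
    return plan


def launch(plan: List[Tuple[Dict[str, str], List[str]]]) -> List[subprocess.Popen]:
    """Start every instance with its GPU-pinning variable layered over the current env."""
    return [subprocess.Popen(cmd, env={**os.environ, **extra_env}) for extra_env, cmd in plan]


if __name__ == "__main__":
    # Dry run: print the plan instead of starting processes.
    for extra_env, cmd in build_launch_plan([0, 1], "TabbyML/CodeLlama-7B"):
        print(extra_env, " ".join(cmd))
```

Separating plan construction from process launch makes it easy to review the commands before anything is started on a shared server.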

Can I use my own model with Tabby?

Yes, follow the Tabby Model Specification to create a directory with specified files, then pass the directory path to --model or --chat-model to start Tabby.

Tabby Integrations

| VS Code | Slack | GitHub |

| GitHub Actions | Supabase | Notion |

Tabby: Verified Data Sheet

| # | Label | Data Point |

|---|---|---|

| [1] | Tabby Consensus: 9.19/10 | Tabby is one of the highest-rated AI coding tools in the Tooliverse index, with a consensus score of 9.19/10 across 500 verified reviews. |

| [2] | What is Tabby | Tabby, operated by TabbyML, Inc., is an open-source AI coding assistant offering both cloud-based (Pochi) and self-hosted solutions. The project has 33,000+ GitHub stars, with pricing starting at free for up to 5 users. |

| [3] | Tooliverse Consensus on Tabby | Tabby has established itself as the premier self-hosted alternative to cloud-based coding assistants, delivering the rare combination of complete data sovereignty and near-instant local processing that security-conscious organizations require. Users consistently praise the seamless IDE integration and cost advantages of open-source deployment, while noting that suggestion quality depends heavily on local model selection and adequate GPU resources. The Docker-based setup simplifies deployment for technical teams, though less experienced users may find initial configuration challenging. |

| [4] | Tabby Verdict | Tabby bottom line: A leading self-hosted coding assistant for organizations where data sovereignty isn't negotiable, delivering cloud-quality AI assistance without the compliance compromises, provided you have the GPU infrastructure to run it effectively. |

| [5] | Community: Free | Tabby provides a free, open-source Community tier for up to 5 users, making AI coding tools accessible at no cost. |

| [6] | Complete data sovereignty with local hosting | Tabby provides complete data sovereignty by enabling organizations to host AI models locally on their own infrastructure, eliminating external data transmission concerns validated by 156 user reviews. |

| [7] | Near-instant local code completions | Tabby delivers near-instant code completions by processing requests locally without cloud API latency, confirmed as a performance advantage in 134 user reviews. |

| [8] | Seamless VS Code and JetBrains integration | Tabby integrates seamlessly with popular IDEs including VS Code and JetBrains platforms, providing smooth workflow integration validated by 112 user reviews. |

| [9] | Team: $19/seat/month | TabbyML, Inc.'s Team tier supports up to 50 users at $19/seat per month, expanding on the free tier's 5-user cap. |

| [10] | Cost-effective open-source alternative | Tabby offers a cost-effective open-source alternative to subscription-based coding assistants, with 98 user reviews highlighting the elimination of recurring monthly fees. |

| [11] | Requires significant GPU resources | Tabby requires significant local GPU resources to run larger models effectively, with 72 user reviews noting performance degradation on systems lacking adequate VRAM. |

| [12] | Suggestion quality varies by model | Tabby's code suggestion quality varies depending on the specific local model deployed, according to 58 user reviews comparing different model configurations. |

| [13] | Privacy: Open-source codebase for transparency | Tabby's privacy protections include an open-source codebase for transparency and self-hosting options for complete data privacy. |

| [14] | Enterprise: Self-hosted deployment | Tabby provides enterprise security through self-hosted deployment and full data control. |

| [15] | Compliance-approved for regulated environments | Tabby enables compliance-conscious organizations to deploy AI coding assistance while meeting internal security requirements, as a verified Hacker News reviewer noted it is "the only AI tool our compliance department actually cleared for use." |

Best Tabby Alternatives

Claude Code

AI-powered coding assistant that works directly in your codebase—build, debug, and ship from terminal to production.

GitHub Copilot

Your AI accelerator for every workflow, from the editor to the enterprise.

Refact.ai

Your open-source AI Agent that codes, thinks, and adapts to your workflow instantly.